Looking back on the progress the cybersecurity industry made in 2018, I remain optimistic that advancements will continue over the year ahead. But the industry also had some big misses, and we all share some accountability for them.

From my perspective, there were some unforced errors in 2018 that have continued to plague our industry. What does this mean going into 2019? Read on…

Apathy

We hear about data breaches almost every day, so it’s no surprise that cyber fatigue is plaguing consumers, government and enterprise alike. In fact, a recent survey found that one in three government employees believed they were more likely to be struck by lightning than have their work data compromised.

Government and enterprise have created an environment and a culture that is nothing short of numbing to the public. We remain indifferent until we hear about cyber attacks like the latest “Collection #1,” which exposed a record-breaking 773 million email addresses and 21 million passwords. Perhaps a breach like this will awaken consumers to DEMAND more from the businesses and organizations that handle their personal information with such clear disregard.

Lip Service

Over the course of my travels this past year, I’ve had the opportunity to hear some of the smartest people in the business talk about cybersecurity. The themes are all the same – huge growth, big problem, critical need, market demand, essential to our future, and investment in all kinds of time and money to solve the problem.

This is not limited to consulting firms (we expect them to be hyperbolic); it extends to some of the leading technology companies, which are in a position to directly impact the industry in a huge way. These big players have slideware that is impressive, spectacular even, yet it’s still just talk. I contend that the single most common element of all this talk is simple: “The problem is big, and you had better pay attention. I have no practical solutions for you today, but don’t worry, we are working on it.”

Cybersecurity Needs to Show Business Value

Apathy and lip service are just two of the many drivers that shape any culture. But wait, there’s more. The reality is evident in the facts. Because the CISO is still a fairly new position, CISOs rarely get a seat in the boardroom or even report to the CEO. When it comes to budget, holiday celebration expenditures have a better chance of getting approved than the newest cybersecurity tool.

So what can any good organization do to address these issues while we wait for the public to assemble and protest? It can make a change. That means elevating cybersecurity into a profit center that demonstrates measurable business value. This is the opportunity we must embrace.

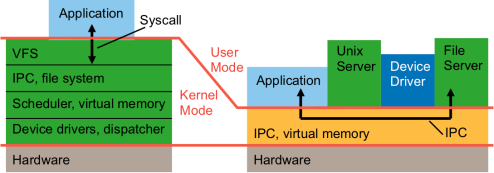

Here at Cog, our tagline is all about security via the virtualization of IoT. Yet our customers find the value in the measurable ROI they realize through the use of our technology, and security just comes along for the ride. The approach to security must be proactive and demonstrate real value to the business by minimizing risk, reducing cost and improving performance. All of this leads to company profit, which is how the CISO earns a seat in the boardroom. If we do that, then we can break through the apathy and lip service that have become our new reality.

In spite of it all, I still have nothing but optimism for the future. It’s a good time to be bold and elevate cybersecurity to a new level, one that will eventually change the industry and the world for the better.